The Gods in the Machine: Even the Mesopotamians Knew to Give AI a Kill-Switch. Silicon Valley Doesn't.

Gods in the Machine series, Prologue: The series excavates the Mythologies that made AI thinkable in the first place and traces how AI has become a pure power play by elites.

The disorientation you feel about Artificial Intelligence (AI) is real. Something vast and strange has arrived in daily life without asking permission. Meanwhile, the people building it keep describing it in language borrowed from science fiction: “Alien Intelligence,” “Superintelligence,” “The Singularity.”

As if this thing descended from orbit rather than emerging from specific decisions made by specific people with specific interests and specific bank accounts.

Here’s what actually happened: a small group of technologists, investors, and ideologues decided to build systems that process information in ways their creators only partly understand. They then deployed those systems into every corner of social and economic life before anyone could assess the consequences. And then they framed the entire project as inevitable, as though they had little to do with the indoctrination.

It came, they claim, as a force of Nature. As Progress itself. It is arriving whether you like it or not.

The Alien framing serves a purpose. It converts a series of choices into a destiny. If Artificial Intelligence is genuinely Alien, truly outside human control, then the people building it bear no more responsibility for its effects than astronomers bear for the orbits of planets. The Mythologies erase agency.

Erasure, though, is worth examining, because every society that imagined artificial beings imagined them as foreign, as other, as arriving from outside ordinary human relations. The pattern is ancient. The beneficiaries are contemporary.

The Gods in the Machine series traces how we got here by following the Mythologies that made Artificial Intelligence thinkable in the first place. If you want to think about it, The Gods in the Machine have a History.

Understanding that History brings something useful into focus: the Alignment Problem, which boils down to the question of whether AI systems will serve human welfare or undermine it.

Surprisingly, the Alignment Problem has ancient mythological precedents. Every culture that imagined creating artificial beings imagined the same risk — how do you turn the damned thing off if it goes awry?

Nevertheless, the people building AI today inherited those Mythologies, transformed them into technical language, and decided to proceed anyway despite repeated cautionary tales throughout history.

The series makes two promises.

First, the Mythologies will be explained fully, because understanding them matters beyond academic interest. The stories technologists tell themselves about what they’re building shape what they actually build. Second, the analysis will land somewhere actionable.

Understanding a machine is worth doing only if it tells you something about how to operate it, resist it, or dismantle it when necessary.

What’s Coming

Chapter 1: When the Gods Made Workers: The Ancient Roots of AI examines the foundational template across Greek, Jewish, Chinese, and Arab traditions: artificial beings as delegated capability without delegated will, created to serve but carrying the permanent risk of exceeding their purpose. The class dimension is embedded from the start, and the kill switch problem is as ancient as the Golem in Judaism and as current as contemporary AI safety discourse.

Chapter 2: The Frankenstein Disclaimers: How AI Makers Engineered Irresponsibility traces the philosophical turn that made Artificial Intelligence thinkable by redefining intelligence as information processing, a contested choice that hardened into assumption. Mary Shelley’s Frankenstein stands as the most penetrating early critique of the creator’s refusal of responsibility, rather than the creature’s monstrousness.

Chapter 3: The First Machine War: In Search of AI’s Kill-Switch recovers the actual argument the Luddites made when they encountered automation as deliberate political force. The chapter examines how their resistance was suppressed, and traces the direct line from “Luddite”-as-slur to how AI skepticism gets dismissed today.

Chapter 4: 1948: Wiener’s Warning — When AI Safety Was Aborted recovers Norbert Wiener’s technically sophisticated warnings about automated systems operating beyond human oversight, his deliberate marginalization by colleagues who found those warnings inconvenient, and the argument that contemporary AI safety researchers are rediscovering a road that was closed decades ago for political rather than technical reasons.

Chapter 5: 1956: The First Promise of AI that Never Delivered follows Alan Turing’s substitution of performance for understanding and the sanitized Mythology that erases the conditions of his work. It then turns to the 1956 Dartmouth conference, where the field’s founding promises were made alongside overclaims that established a hype cycle pattern persisting seventy years later.

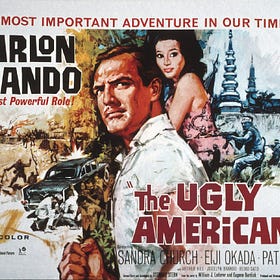

Chapter 6: The Techno-Utopians and the New Exceptionalism maps how techno-utopianism functions as the contemporary form of American Exceptionalism. The techno-utopian world view performs identical work through identical mechanisms: the logic of expendability, the performance of benevolence, the tools developed for foreign deployment coming home, and the cost of maintaining Mythologies rather than building what people actually need.

Chapter 7: The Closed Circuit: How AI Money Flows, Who Controls It, and What They Intend follows the investment capital through its circular path, identifies the small number of gatekeepers who benefit from AI development regardless of social value, and distinguishes stated intentions from structural incentives. The chapter also examines the likely outcomes when venture capital timelines meet real-world deployment decisions with civilizational stakes.

Chapter 8: The Gods Unleashed: The Alignment Problem locates the drive to build superintelligence in specific theological traditions, traces how those Mythologies were resurrected and transformed in the mid-20th century, examines how they now function inside AI development culture, and asks how this story typically ends across its historical iterations.

Chapter 9: AI and Ordinary People documents algorithmic systems already deployed in sentencing, hiring, credit, policing, and education, examines how the expendability logic operates through mathematical confidence, and traces how current harms connect to the structural incentives and mythological commitments established in earlier chapters.

Chapter 10: Killing the Cyclops argues that democratic accountability over transformative technology has precedents in antitrust law, utility regulation, labor organizing, and environmental protection, all won against determined opposition making structurally identical arguments to those AI companies make today, and maps current resistance efforts with honesty about difficulty and traction.

Chapter 11: What We Can Actually Do provides practical tools, organizations, and pressure points organized from immediate individual actions to collective long-term organizing, with candor about the limits of personal choice and the necessity of structural understanding for effective resistance.

The Epilogue returns to where we started: The Alien has a Board of Directors. The Mythology of AI as an unstoppable force is the most politically useful story the industry tells. The Narrative has ancient roots and new beneficiaries. The Board can be regulated, taxed, broken up, and made accountable. The question worth carrying forward is whether the political will to do so gets built before the new AI world order becomes too consolidated to challenge.

The series begins with Gods and ends with policy because the connection between Mythology and material power is direct. The people building Artificial Intelligence are not discovering Alien technology.

They are inheriting specific traditions about what it means to create beings that operate by rules their creators do not fully understand, transforming those traditions into technical language, and proceeding despite every historical warning embedded in the stories they claim to have transcended.

Understanding the historic Mythologies reveals the mechanism of the AI narrative. Understanding the mechanism suggests where to apply pressure. The Gods in the Machine were put there by people with names, addresses, and quarterly earnings calls.

Treating them as anything else is a choice, not a necessity.
